It is also good to know how far we are from the currently known ceiling (exponent) on the speed of matrix multiplication. In a virtuous feedback cycle, I wonder if this advance could lead to a faster deep learning algorithm itself.

I was also wondering if a regular/exhaustive computer search could find a similar optimization. Thank you for explaining the practical impact of the AlphaTensor discovery! It was not clear from the news why this was useful. For more, see this blog post by Adam Goucher. While AlphaTensor’s result implies a faster non-galactic algorithm for matrix multiplication than Strassen’s algorithm, with an exponent of \( \log_4 47 = 2.777\) as compared to Strassen’s \( \log_2 7 = 2.807\), the best known asymptotics for any non-galactic algorithm for matrix multiplication is based on Smirnov’s 3x3圆 matrix multiplication algorithm, with an exponent of 2.774. You can leave a response, or trackback from your own site.ģ5 Responses to “Postdocs, matrix multiplication, and WSJ: yet more shorties” You can follow any responses to this entry through the RSS 2.0 feed. On Friday, October 7th, 2022 at 11:01 am and is filed under Announcements, Complexity. “It’s become common knowledge that when Chinese students or postdocs come to the U.S., they can’t say they’re doing quantum computing,” says Scott Aaronson, director of the Quantum Information Center at the University of Texas, Austin. Meanwhile, American academics say it’s gotten harder for Chinese students to obtain visas to conduct quantum research in the U.S. Unfortunately paywalled, but includes the following passage:

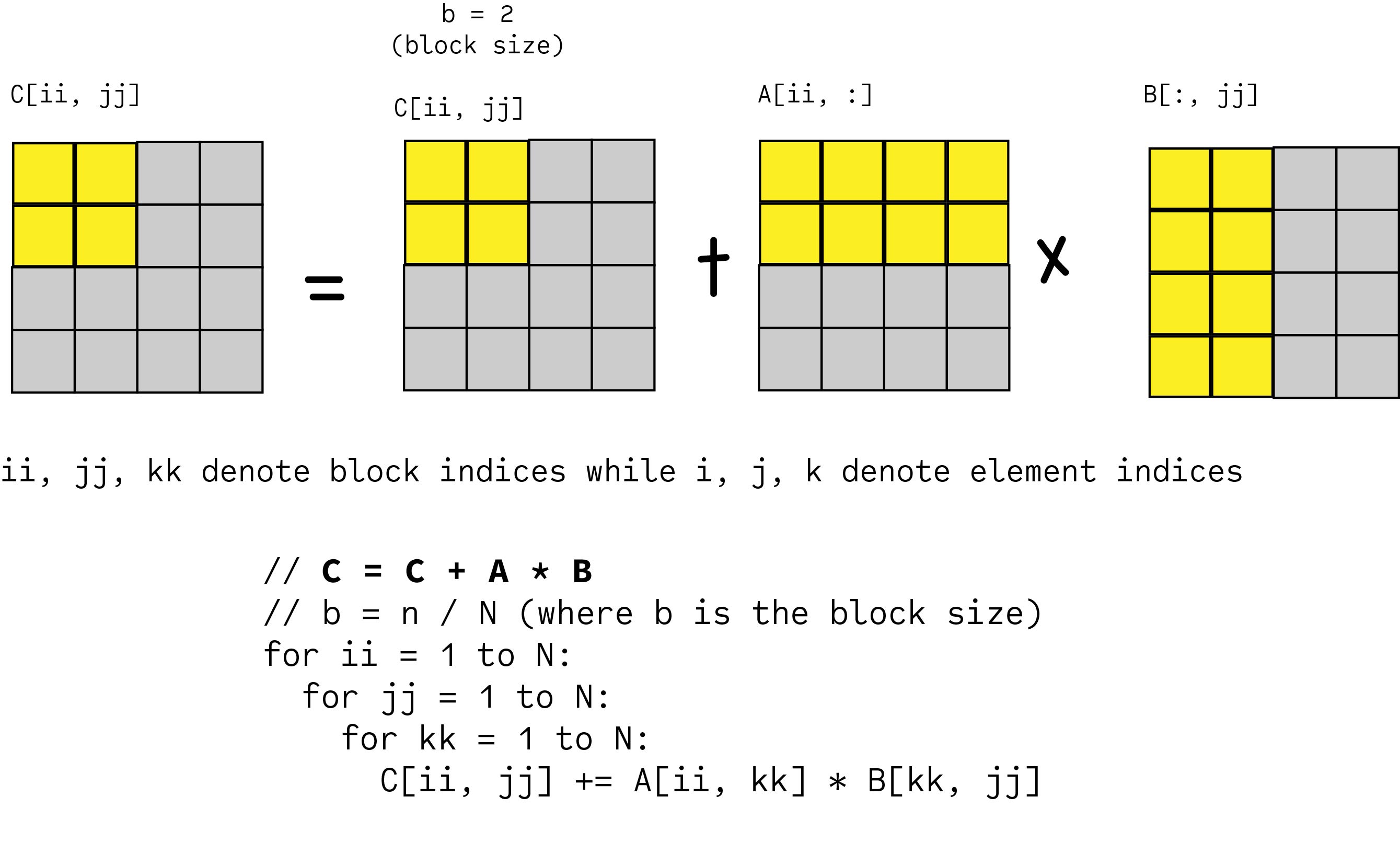

This morning, the Wall Street Journal published an article by Karen Hao about competition between China and the US in quantum computing. Of course, that was before the deep learning revolution. The response I got at the time was that it was hopeless, since the search space was already too huge. Way back in 2007, I gave a talk at MIT CSAIL’s “Wild and Crazy Ideas Session,” where I explicitly proposed to use computer search to look for faster algorithms for 4×4 and 5×5 matrix multiplication. But it does asymptotically improve over Strassen’s O(N 2.81) algorithm from 1968, conceivably even in a way that could have practical relevance for multiplying hundreds-by-hundreds or thousands-by-thousands matrices over F 2. Since I’ve seen confusion about the point on social media: this does not improve over the best known asymptotic exponent for matrix multiplication, which over any field, still stands at the human-discovered 2.373 (meaning, we know how to multiply two N×N matrices in O(N 2.373) time, but not faster). There are other improvements for other matrix dimensions, many of which work over fields of other characteristics. This beats the 49=7×7 that you’d get from Strassen’s algorithm. ( See here for the Nature paper.) More concretely, they’ve used AI to discover (among other things) an algorithm for multiplying 4×4 matrices, over finite fields of characteristic 2, using only 47 scalar multiplications.

The big news in AI and complexity theory this week was DeepMind’s AlphaTensor, and its automated discovery of new algorithms for matrix multiplication. The deadline is December 1 you apply through AcademicJobsOnline rather than by emailing me as in past years. And Nick, Matteo, and I are jointly seeking postdocs to start in Fall 2023! Please check out our call for applications here.

I’m proud to say that Nick Hunter-Jones and Matteo Ippoliti-both of whom work at the interface between quantum information science and condensed-matter physics (Nick closer to the former and Matteo to the latter)-have joined the physics faculty at UT Austin this year.

0 Comments

Leave a Reply. |

RSS Feed

RSS Feed